Of Lost Sheep and Smart Models: How AI Opens Up New Perspectives on Cultural Texts

The workshop on “Computational Linguistics for Cultural Heritage, Social Sciences, Humanities, and Literature” focused on the question of how modern language processing—particularly large language models (LLMs)—can be used to analyze cultural, historical, and literary data. The presentations reflected an impressive thematic and linguistic diversity, ranging from historical text corpora and literary analyses to socially relevant issues such as disinformation and linguistic diversity.

Diverse Program Spanning Theory and Application

The program for the in-person event on March 29 included several sessions on topics such as diachronic language data, identity, information extraction, and literary language. This was complemented by an invited lecture by Dr. Barbara McGillivray, Senior Lecturer in Digital and Computational Humanities at King’s College London, who shed light on methodological perspectives on LLMs in the humanities.

Particularly noteworthy was the breadth of methodological approaches. In addition to classic natural language processing (NLP) techniques, generative models and hybrid methods were increasingly used, for example to annotate historical texts, analyze propaganda, or investigate literary stylistics.

Poster Session as a Catalyst

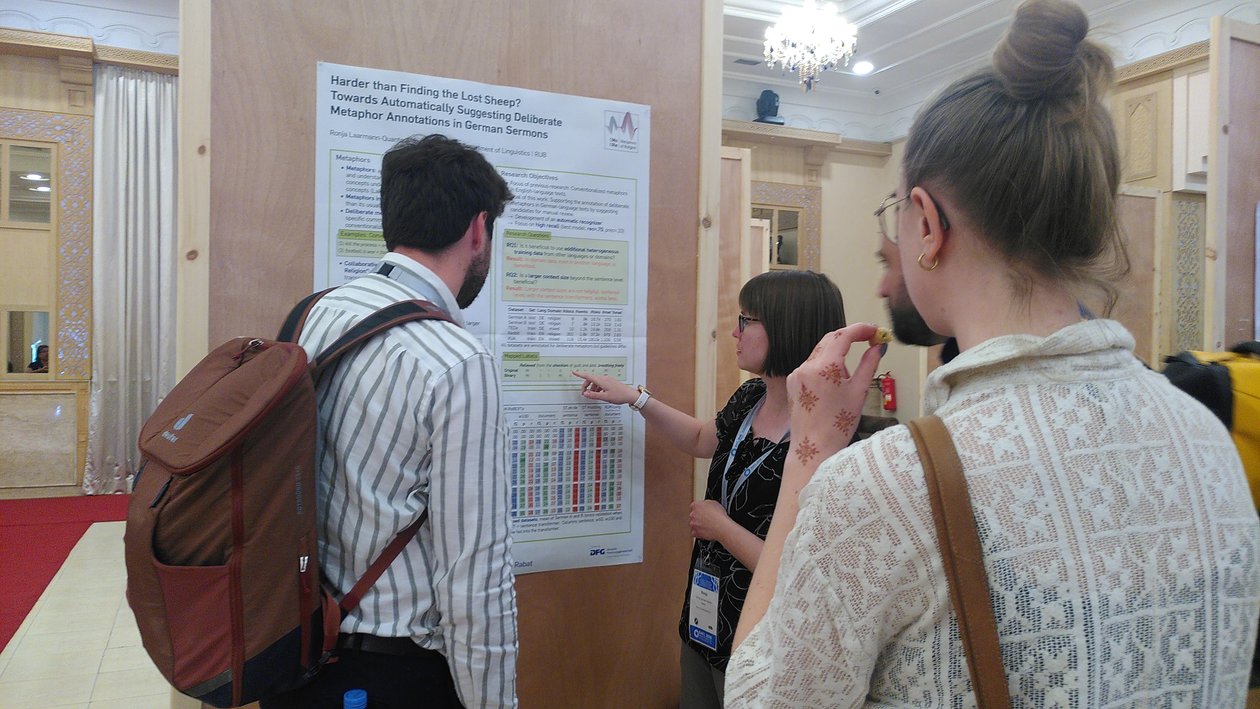

A central part of the program was the poster session, which provided a space for exchange on ongoing projects and new ideas. Here, Stefanie Dipper and Ronja Laarmann-Quante presented their contribution “Harder than Finding the Lost Sheep? Towards Automatically Suggesting Deliberate Metaphor Annotations in German Sermons.” The work addresses the automatic identification and annotation of deliberate metaphors in German-language sermons, a challenging problem at the intersection of linguistics, hermeneutics, and machine learning. It illustrates how computational methods can support complex interpretive tasks in the humanities.

In addition to this presentation, the poster session offered a wide range of topics, such as authorship analysis, stylometry, language variation, historical corpora, and the modeling of scientific concepts.

Overall, the SIGHUM (LaTeCH-CLfL) 2026 workshop highlighted how rapidly the field of computational humanities is evolving. In particular, the integration of large language models opens up new perspectives for the analysis of cultural and historical datasets. At the same time, it became clear that combining technological innovation with humanities expertise remains central to the field’s progress.